Executive Summary

- Lumia Labs analyzed Google’s Antigravity and discovered three security issues, which were responsibly disclosed.

- We are publishing our findings now that the one issue Google agreed to fix has been patched.

- The two other issues remain unpatched, as Google did not consider them to cross a security boundary.

Introduction

Google’s Antigravity is a relatively new IDE, touted as a “next generation IDE” and “agentic development platform”. When it was released, it was the first IDE to incorporate a built-in browser, CLI and development UI. But more features mean a larger attack surface, and with it more opportunities for attackers.

In this blog post, we’ll share our thought process when looking at a new application and attack surface, as well as our findings from the initial research and disclosures.

AIKatz Strikes Again

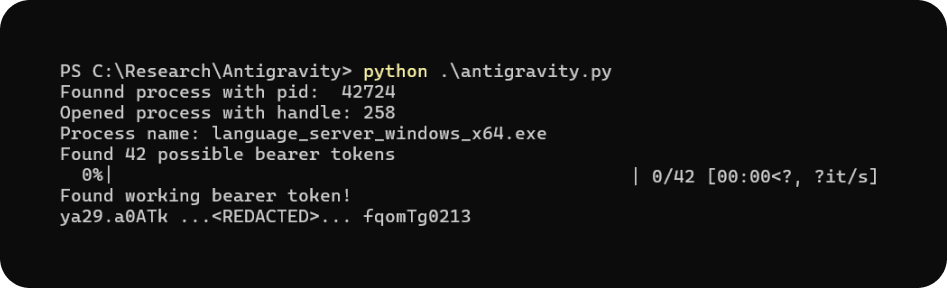

Back when we did our initial AIKatz research, we looked for exposed authentication tokens in AI desktop applications and demonstrated the attack against all major vendors: ChatGPT from OpenAI, Claude from Anthropic and M365 Copilot from Microsoft. At the time, we were only missing an application from Google, since Gemini only has the browser chatbot and not a desktop application. Of course, we could apply AIKatz to the browser, but picking a useful authentication token out of the mass of data a browser holds is much less likely to succeed. With the release of Antigravity, we could finally complete the set.

The difference between Antigravity and other desktop applications is that Antigravity’s VSCode UI is mainly for user inputs, and does not handle LLM logic. Instead, it spawns another process, called language_server, that is responsible for that part of the logic. The language server process and the Antigravity process communicate between themselves using the same authentication methods the language server needs to access the LLM backend. This is the same kind of infrastructure as in Windsurf, and in fact a lot of the internal naming inside the language server also alludes to Cascade, Windsurf’s coding agent. Maybe it’s related to Google’s acquihire?

Google dismissed our disclosure, as their policy is to ignore local-only attacks, but we still think it warrants attention. Consider the following scenario: an attacker harvests the authentication token and talks to the language server as if they were the user, causing the language server to delete or encrypt files on the system. To the EDR, all of these actions appear to come from the language server process, which hinders detection of the attacker’s malicious process.

Listen and You Shall Receive

Now that we’ve exhausted the known attack surfaces, it’s time to look at some new stuff, and this time, Google didn’t dismiss the disclosure.

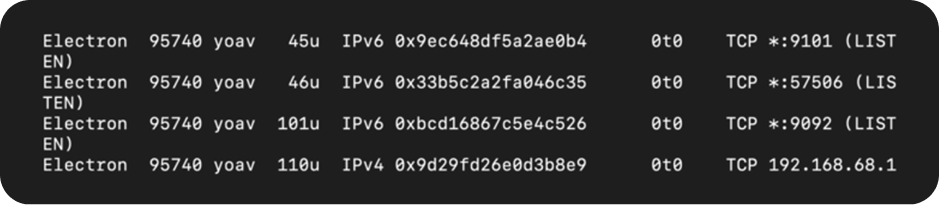

Given that Antigravity has two components (the VSCode-based Antigravity executable and the language server), they have to communicate with each other somehow. So we took a look at which ports the processes listen on.

On Windows, we saw the processes listening on a few ports, and bound to 0.0.0.0 no less!

This means that any user on the machine can communicate with them (relevant for a terminal-server kind of deployment), and also that other hosts on the network can reach those processes. Luckily for most users, there isn’t a lot going on behind those ports, so their exposure isn’t a big issue.
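To make the difference between a loopback bind and a 0.0.0.0 bind concrete, here is a minimal Python sketch that flags listeners reachable from outside the machine. The function names and the sample port numbers are ours, chosen for illustration; they are not the ports Antigravity actually uses.

```python
import ipaddress


def is_network_exposed(bind_address: str) -> bool:
    """Return True if a listener bound to this address accepts non-local peers.

    Only loopback binds (127.0.0.0/8 and ::1) are treated as local-only.
    Wildcard binds (0.0.0.0, ::) and concrete interface addresses are exposed.
    """
    return not ipaddress.ip_address(bind_address).is_loopback


def exposed_listeners(listeners):
    """Filter (address, port) pairs down to those reachable from the network."""
    return [(addr, port) for addr, port in listeners if is_network_exposed(addr)]


# Hypothetical listener table, e.g. as parsed from netstat or lsof output.
SAMPLE_LISTENERS = [
    ("127.0.0.1", 5000),
    ("0.0.0.0", 5001),
    ("::1", 5002),
    ("::", 5003),
]

if __name__ == "__main__":
    for addr, port in exposed_listeners(SAMPLE_LISTENERS):
        print(f"{addr}:{port} is reachable from the network")
```

Whether an exposed listener is actually reachable still depends on host firewalls and network segmentation, so treat a hit as a lead to investigate rather than proof of exposure.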

On Mac, however, it’s a different story.

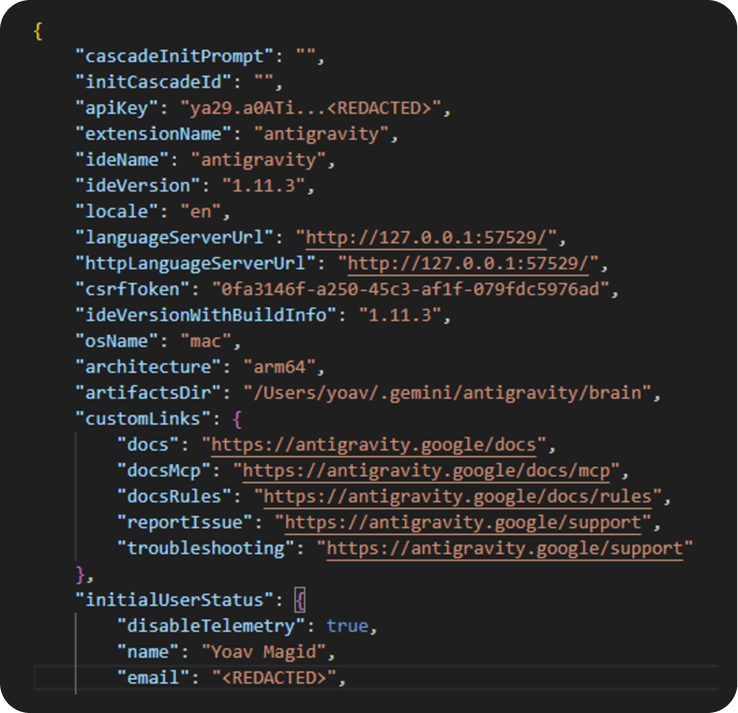

What’s this? There’s a new port here that we didn’t see on Windows! Turns out, it’s a port that exposes the conversation panel of the Electron app in its own HTML page. It also gets the 0.0.0.0 treatment, meaning it’s accessible from the network, as Figure 4 shows, where we access it from a mobile device.

This webUI and its port were most likely an oversight: the submit button triggered a POST request to localhost, so using the page from another machine didn’t actually do anything. The main issue with the webUI was that its HTML contained a JSON object with the user’s information, including their authentication token to the LLM backend.
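For teams auditing their own local web UIs for the same class of leak, a rough check is to scan served HTML for embedded JSON and flag keys that look like credentials. The sketch below is a minimal illustration under our own assumptions: that the JSON sits inside a `<script>` element, and that key names contain strings such as `token`. The sample page and its field names are invented and do not reproduce Antigravity’s actual structure.

```python
import json
from html.parser import HTMLParser


class ScriptCollector(HTMLParser):
    """Collect the text content of every <script> element in a page."""

    def __init__(self):
        super().__init__()
        self._in_script = False
        self._chunks = []
        self.scripts = []

    def handle_starttag(self, tag, attrs):
        if tag == "script":
            self._in_script = True
            self._chunks = []

    def handle_data(self, data):
        if self._in_script:
            self._chunks.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_script:
            self.scripts.append("".join(self._chunks))
            self._in_script = False


def sensitive_key_paths(obj, needles=("token", "secret", "apikey"), prefix=""):
    """Walk parsed JSON and return dotted paths of keys that look sensitive."""
    found = []
    if isinstance(obj, dict):
        for key, value in obj.items():
            path = f"{prefix}.{key}" if prefix else key
            if any(n in key.lower() for n in needles):
                found.append(path)
            found.extend(sensitive_key_paths(value, needles, path))
    elif isinstance(obj, list):
        for i, value in enumerate(obj):
            found.extend(sensitive_key_paths(value, needles, f"{prefix}[{i}]"))
    return found


def scan_html(html: str):
    """Return sensitive-looking key paths found in JSON embedded in script tags."""
    collector = ScriptCollector()
    collector.feed(html)
    collector.close()
    hits = []
    for body in collector.scripts:
        try:
            data = json.loads(body)
        except ValueError:
            continue  # not JSON (e.g. regular JavaScript); skip it
        hits.extend(sensitive_key_paths(data))
    return hits


# Invented sample page; the field names are hypothetical.
SAMPLE_PAGE = (
    '<html><body><script type="application/json">'
    '{"user": {"name": "alice", "authToken": "REDACTED"}}'
    "</script></body></html>"
)

if __name__ == "__main__":
    print(scan_html(SAMPLE_PAGE))
```

A key-name heuristic like this will miss tokens stored under neutral names, so it complements, rather than replaces, a manual review of what a page serves.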

Using the authentication tokens in this structure, we could communicate with the LLM backend and the language server just like we did with the AIKatz attack, but in this case the token leaked over the network. This time Google didn’t dismiss our disclosure, and we were awarded a bounty for it, although no CVE number was assigned. We were notified that a fix was deployed last month (January 2026).

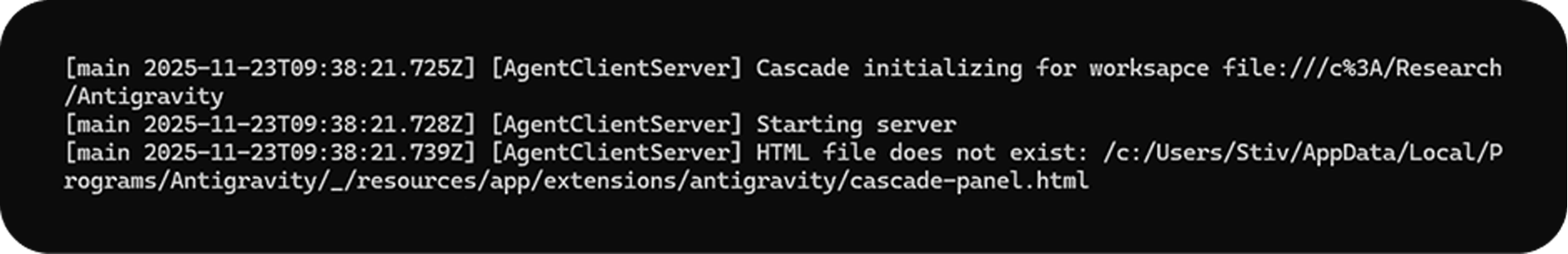

Now why didn’t we see that port on Windows? It doesn’t seem to be an OS-specific component. Funny you should ask, it’s a bug… The server component responsible for the webUI (called AgentClientServer internally) tries to build the paths to its resources dynamically, and for some reason prepends a ‘/’ to the actual HTML path. On Windows, this causes it to fail to find the file and therefore fail to start.
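We don’t know exactly how AgentClientServer builds its paths, but the following Python sketch shows why an unconditionally prepended ‘/’ tends to break only on Windows. The helper name and the example path are hypothetical; `pathlib`’s pure path classes let the Windows behavior be reproduced on any OS.

```python
from pathlib import PureWindowsPath


def with_leading_slash(path: str) -> str:
    """Mimic the reported bug: always prepend '/' to a resource path."""
    return "/" + path


# Hypothetical install location, for illustration only.
GOOD_WINDOWS_PATH = "C:/Program Files/App/resources/panel.html"
BROKEN_WINDOWS_PATH = with_leading_slash(GOOD_WINDOWS_PATH)


def describe(path: str) -> dict:
    """Show how Windows path rules interpret the given string."""
    p = PureWindowsPath(path)
    return {"drive": p.drive, "absolute": p.is_absolute()}


if __name__ == "__main__":
    print(GOOD_WINDOWS_PATH, describe(GOOD_WINDOWS_PATH))
    print(BROKEN_WINDOWS_PATH, describe(BROKEN_WINDOWS_PATH))
```

With the extra slash, `C:` is no longer parsed as a drive letter, so the path no longer points at the intended file. On macOS every absolute path already starts with ‘/’, which would explain why the same logic can go unnoticed there.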

We reported this to Google as well (as a regular bug, not a security issue), but were dismissed and referred to StackOverflow. All the talk about webservers, JavaScript and HTML must’ve confused them into thinking we were talking about our own code, and not their internal components…

Conclusion

Our research into Google's Antigravity IDE highlights the inherent security challenges that come with new and feature-rich products, especially those in the AI field. This is further exacerbated by the growing use of AI-led development to shorten development cycles, often at the cost of security.

In our research, we successfully applied an AIKatz-style attack to Antigravity, harvesting sensitive authentication tokens from its processes’ memory. Then, we took that one step further and managed to extract those same tokens from an exposed interface and port in the macOS version.

Ultimately, these findings underscore the volatile nature of products in the AI field, which typically see very fast development times and a constant stream of new releases and innovations. The attacks we found can not only affect Antigravity’s processes, but also allow threat actors to interact with the LLM in the name of the victim and influence how the LLM behaves and interacts with the victim’s machine, thus achieving far-reaching effects.

For defenders, there isn’t a sure-fire way to detect the attacks discussed in this blog. Since the attacks are local only or require a simple HTTP request, there aren’t a lot of signals that can be detected. Instead, LLM and AI governance is an effective way to reduce the potential impact of such attacks, regardless of how the authentication tokens were extracted, since abusing them still requires that the attacker reach out to the LLM backend.

Lumia Security monitors such communication and will alert on suspicious behavior detected through our platform.

Contact Us to learn more.